The High Performance Online Platform 699989861 Guide takes a disciplined view of speed, reliability, and scale under variable load. It sets out a measurable latency taxonomy, clear performance metrics, and resource-efficient patterns. The core architecture emphasizes modular boundaries, asynchronous messaging, and adaptive caching, all guided by latency budgeting. Deployment and monitoring rely on cross-functional alignment, canary releases, and centralized dashboards. The guide invites a practical, data-driven discussion of trade-offs and next steps, and avoids claiming certainty before the measurements support it.

What Defines a High Performance Online Platform 699989861

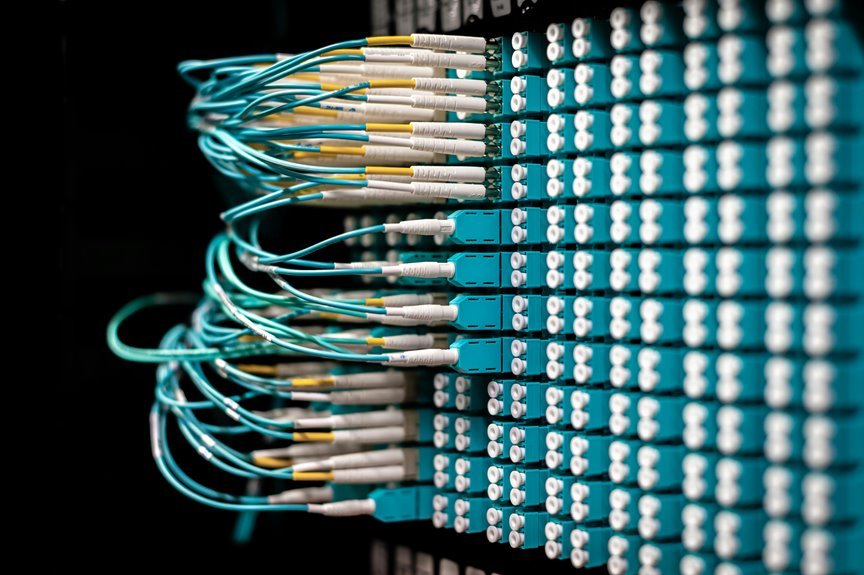

A high-performance online platform is defined by its ability to deliver scalable, reliable, and low-latency services under varying load conditions, while maintaining strong security and operational resilience.

Evaluation relies on a latency taxonomy, such as separating median latency from tail latency, and on quantifiable metrics that steer decisions toward efficient resource utilization.

Collaborative teams test how effective the caching strategy is, monitor tail latencies, and agree on shared benchmarks, so that they can innovate freely without giving up reliability or control.
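Monitoring tail latencies usually comes down to reporting percentiles rather than averages. The sketch below is one minimal way to do that, assuming latency samples are already collected in milliseconds; the function names, the nearest-rank method, and the sample data are illustrative choices, not something the guide prescribes.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_summary(samples_ms):
    """Return median and tail percentiles for a list of latencies in milliseconds."""
    return {
        "p50": percentile(samples_ms, 50),
        "p95": percentile(samples_ms, 95),
        "p99": percentile(samples_ms, 99),
    }


# Hypothetical data: 98 fast requests and 2 slow outliers.
samples = [10.0] * 98 + [250.0, 900.0]
summary = latency_summary(samples)
mean_ms = sum(samples) / len(samples)
```

With this hypothetical data the median stays at 10 ms while p99 jumps to 250 ms. That gap is the reason to track tail percentiles alongside the mean.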

Core Architecture Patterns for Speed, Reliability, and Scale

What core architecture patterns drive speed, reliability, and scale in high-performance online platforms, and how do they align with measurable outcomes? The main ones are modular service boundaries, asynchronous messaging, and adaptive caching. Latency budgeting directs resource investment toward the parts of a request path that consume the most time, while traffic shaping protects back-end services from overload. The approach is data-driven and collaborative: teams agree on measurable outcomes, stay aligned across boundaries, and keep decision making low-friction enough that each team remains free to choose how it meets its targets.
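Latency budgeting can be made concrete by splitting an end-to-end target across the hops of a request and comparing each hop's measured tail latency with its share. The sketch below assumes hypothetical hop names, budgets, and measurements. Summing per-hop p99 values is only a rough approximation of end-to-end p99, used here for simplicity.

```python
def budget_headroom(total_budget_ms, hop_p99_ms):
    """Remaining end-to-end budget after summing measured per-hop p99 latencies."""
    return total_budget_ms - sum(hop_p99_ms.values())


def over_budget_hops(hop_budget_ms, hop_p99_ms):
    """Names of hops whose measured p99 exceeds their allotted budget.

    A hop with no budget entry is treated as having a budget of 0 ms,
    so unbudgeted hops are always flagged.
    """
    return sorted(
        hop for hop, measured in hop_p99_ms.items()
        if measured > hop_budget_ms.get(hop, 0.0)
    )


# Hypothetical budgets and measurements, in milliseconds.
TOTAL_BUDGET_MS = 200.0
hop_budget_ms = {"edge": 25.0, "api": 50.0, "cache": 10.0, "db": 100.0}
hop_p99_ms = {"edge": 20.0, "api": 60.0, "cache": 5.0, "db": 80.0}

headroom = budget_headroom(TOTAL_BUDGET_MS, hop_p99_ms)
offenders = over_budget_hops(hop_budget_ms, hop_p99_ms)
```

In this example the request path still has 35 ms of headroom overall, but the `api` hop exceeds its own share, which points to where investment would go first.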

Practical Deployment and Monitoring Workflows That Work

How can deployment and monitoring workflows be structured to sustain speed, reliability, and scale in practice? Cross-functional teams start by aligning on metrics, automation, and observability. Distributed caching and edge routing keep responses fast, while distributed tracing shortens feedback loops by showing where time is spent. Canary deployments limit the impact of a bad release, and centralized dashboards track health signals. Documentation, rehearsed runbooks, and post-incident reviews sustain continuous improvement and collective ownership.
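A canary release needs an explicit rule for when to promote, wait, or roll back. The sketch below shows one possible rule that compares the canary's error rate and p99 latency against the baseline. The `Window` type, the thresholds, and the three outcomes are assumptions made for illustration, not values taken from the guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Aggregated health signals for one deployment over an observation window."""
    requests: int
    errors: int
    p99_ms: float

    @property
    def error_rate(self):
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(baseline, canary, *, min_requests=500,
                    max_error_rate_delta=0.005, max_p99_ratio=1.2):
    """Return "wait", "rollback", or "promote" (all thresholds are hypothetical)."""
    if canary.requests < min_requests:
        return "wait"  # not enough traffic to judge yet
    if canary.error_rate - baseline.error_rate > max_error_rate_delta:
        return "rollback"  # canary fails noticeably more often than baseline
    if baseline.p99_ms > 0 and canary.p99_ms / baseline.p99_ms > max_p99_ratio:
        return "rollback"  # canary tail latency regressed too far
    return "promote"


baseline = Window(requests=100_000, errors=100, p99_ms=180.0)
healthy = canary_decision(baseline, Window(requests=1_000, errors=2, p99_ms=190.0))
failing = canary_decision(baseline, Window(requests=1_000, errors=20, p99_ms=190.0))
```

Keeping the rule in code rather than in someone's head makes it reviewable and lets the same thresholds appear on the dashboard and in the runbook.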

Tuning Techniques to Squeeze Latency and Boost Throughput

To push latency down and throughput up, the approach combines empirical measurement with targeted adjustments across the stack. Systematic latency profiling shows where the bottlenecks are, so optimizations can be aimed precisely. Cross-functional teams align on throughput budgets, balancing resource allocation against demand. Quantitative experiments, reproducible benchmarks, and continuous feedback make the improvement work disciplined and collaborative, without sacrificing team autonomy or system resilience.
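A reproducible benchmark can start as a small harness that warms up the code path, times each call, and reports throughput alongside latency percentiles. The sketch below uses only the Python standard library; the `benchmark` function, its parameters, and the workload are hypothetical, and a real platform benchmark would also need to control for load, concurrency, and environment.

```python
import time


def benchmark(fn, *, iterations=1000, warmup=100):
    """Time repeated calls to fn and report throughput and latency percentiles."""
    if iterations <= 0:
        raise ValueError("iterations must be positive")
    for _ in range(warmup):
        fn()  # warm caches and lazy initialisation before measuring

    latencies = []
    start = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        fn()
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start

    latencies.sort()
    n = len(latencies)
    return {
        "iterations": iterations,
        "throughput_per_s": iterations / elapsed,
        "p50_ms": latencies[n // 2] * 1000,
        "p99_ms": latencies[min(n - 1, (n * 99) // 100)] * 1000,
    }


# Hypothetical workload standing in for a request handler.
result = benchmark(lambda: sum(range(1000)), iterations=200, warmup=10)
```

Running the same harness before and after a change, on the same hardware, gives a like-for-like comparison that a single ad hoc timing cannot.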

Conclusion

The platform's blueprint works because its metrics, service boundaries, and teams line up with one another, so design and operation reinforce each other. Modular boundaries, asynchronous messaging, and adaptive caching are the patterns intended to keep latency budgets predictable and throughput scalable. Cross-functional collaboration and canary-driven risk assessment, backed by dashboards and runbooks, support resilient delivery. The result is a collaborative, data-driven trajectory in which every incident and benchmark feeds improvement along a shared, measurable path.